I decided a few months ago that I ought to visit Japan, because I rather enjoy some of their products, and design aesthetic, and romanticize the pastoral living. I have a fantasy about potentially retiring there, so I better visit…

Introduction After starting a meditation practice and keeping with it for a year, I wanted to learn more about any physiological changes that might have occurred. In particular, if I had — or if I could change my practice to…

The reductionist scientists have promised that we can cure aging by studying molecular pathways (nutrient sensing, hormonal signalling, IGF-1), searching for small molecules having large effects on metabolism (semaglutide, rapamycin, etc), and genes with large effects (daf-2 in doubles nematode…

After thinking for a bit about a mechanical device that computes a fake punched card for the 1800s Jaquard loom, I figured it appropriate to first look at mechanical logic done with Legos. Fortunately, some enterprising people have already created…

The other weekend I experience the pleasure and fascination of visiting the Antique Gas and Steam Engine Museum. While there, my friend and I perused the loom room Weaver’s Barn. At first, I’m shocked that people spend time weaving, as…

Corderio launched the day with an exciting whirlwind update of changes in the political landscape, naming a couple massive investments. Followed by SENS Foundation highlighting their ability to maintain focus and organize contributions to advance the field. Kenneth Scott then…

I skipped the Expo talks, as those are mostly infomercials from the vendors. Liz Parrish opened up the day by identifying herself as a pioneer for having taken 4 different gene therapies! She must do this in foreign lands because…

RAADFest opens up like a rock concert. With Bryan Johnson as the keynote speaker. Followed by an infomercial for Brain Tap and whirlwind tour of recent news headlines over the last year collected by Bill Faloon peppered with anecdotes of…

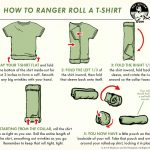

Use the Ranger Roll This is the first step in compacting the clothes so they’ll fit in the luggage. When rolling them up, you can also plan for each day (socks, underwear, shirt) as its own roll! At the end…

Unusually, I’m actually giving feedback to a local government office. All due to some videos I watched about proposed expansion of the system. I’ve chosen _not_ to get a car, even though I could afford one, because investments in the…